Probablistic Nature of Language Models

Large Language Models (LLMs) are probabilistic, not deterministic, systems. This means that instead of functioning like a database that retrieves fixed, absolute facts, they operate as statistical models that predict the most likely next word (or token) in a sequence based on vast amounts of training data.

-

Fundamental Mechanism: Next-Token Prediction

LLMs generate text by predicting the "next likely word" based on the context of the words that came before it.

Stochastic Output: Because LLMs assign probabilities to a range of potential next words, the same prompt can produce different answers upon repeated queries, as the model "samples" from this probability distribution.

Not a Truth Machine: LLMs learn statistical correlations in language, not facts about the world. A plausible-sounding sentence does not guarantee factual accuracy, as the model is simply selecting words that tend to appear together.

-

Hallucinations and Errors

The probabilistic nature of LLMs is the primary reason for "hallucinations"—confident but incorrect answers.

Overgeneralization: When data is missing, the model fills in the gaps with the most statistically probable information, which may be entirely fabricated.

No Internal Fact-Checking: Because they operate on probability rather than a structured knowledge base, LLMs cannot verify if a statement is true.

-

Key Factors Influencing Probability

Several factors affect the output, shifting the likelihood of different word choices:

- Temperature: A parameter that controls randomness.

- Lower temperature: The model becomes conservative, picking only the highest-probability words (predictable).

- Higher temperature: The model picks lower-probability words, leading to more "creative" or diverse—but less accurate—outputs.

- Context: Adding specific context or instructions increases the probability of relevant, accurate words being chosen, reducing randomness.

- Temperature: A parameter that controls randomness.

-

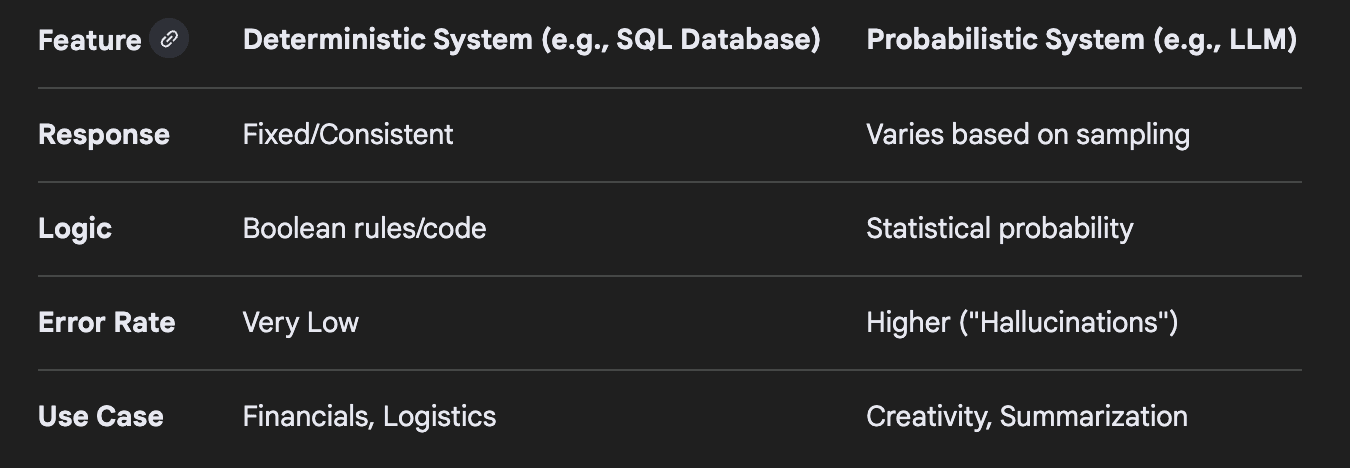

Probabilistic vs. Deterministic Programs

Unlike traditional software (like a calculator or Excel), which is deterministic (providing the same answer every time for a given input), LLMs are probabilistic.

-

Mitigating Probabilistic Risks

To manage this behavior, developers use several techniques to force consistency:

-

Lowering Temperature: Setting to 0 to force the model to select the top prediction consistently.

-

Retrieval-Augmented Generation (RAG): Connecting the LLM to trusted external data sources to retrieve facts before generating a response.

-

Prompt Engineering: Giving explicit instructions about constraints, such as "only use the provided text".

-

In summary, LLMs are effectively "stochastic parrots"—highly sophisticated pattern-matching machines that model the statistical structure of language, rather than understanding or reasoning about the world in a human-like way.